As many Canadians will tell you, most Torontonians are a bit skewed. While others might have different explanations, I put it down to our road grid. What we call north in the city is actually some 16.7° west of north.

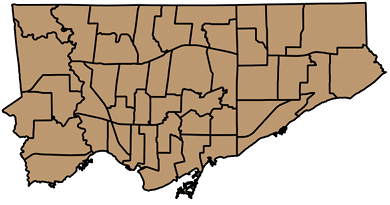

While we do have a perpendicular grid, it’s twisted to the left (steady, Edmontonians). I attempted to work out what the average angle is.

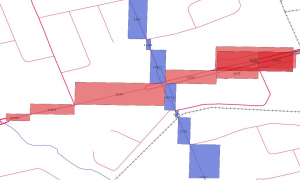

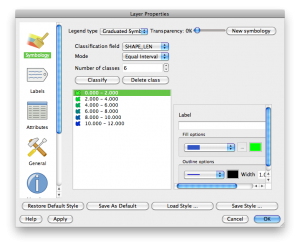

I eyeballed several long, straight streets and picked out representative intersections. Finding the coordinates of these intersections took a surprisingly long time, as each street is made up of several road sections. To find an intersection, each section of one road has to be queried against each section of the other. It gets there, eventually.

To save the tedium of showing the queries, here are the results. The angles are anticlockwise from the X-axis.

| N-S street | Intersection 1 | Intersection 2 | Angle (°) |
|------------|----------------|----------------|-----------|
| Bathurst   | King           | Dupont         | 16.184    |
| Yonge      | King           | Rosehill       | 16.783    |
| Woodbine   | Queen          | Plains         | 17.049    |

| E-W street | Intersection 1 | Intersection 2 | Angle (°) |
|------------|----------------|----------------|-----------|
| Danforth   | Greenwood      | Main           | 16.736    |
| Eglinton   | Dufferin       | Bayview        | 16.159    |
| Steeles    | Dufferin       | Kennedy        | 17.194    |

I was surprised that SpatiaLite didn’t have any angle measurement functions built in, so I had to feed the output through `geod`. For instance, on Steeles Avenue, the intersection of Steeles West and Dufferin is at 43.787229°N, 79.470285°W, and the intersection of Steeles East and Kennedy is at 43.823636°N, 79.307265°W. Feeding these numbers to `geod`:

    echo '43.787229 -79.470285 43.823636 -79.307265' | geod +ellps=WGS84 -f "%.3f" -p -I +units=m

This spits out three numbers: 72.806 252.918 13727.395. The first is the bearing from the first point to the second, measured clockwise from north; this is the number we’re interested in, and it’s (90 - 72.806) = 17.194° anticlockwise from east. The second is the bearing from the second point back to the first. The last is the distance in metres; Steeles is a long, straight street.
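The conversion from a compass bearing to an angle anticlockwise from east (the X-axis convention used for the tables) is just a subtraction from 90°. A minimal sketch in shell, assuming `awk` is available; `bearing_to_angle` is a hypothetical helper name, not part of `geod`:

```shell
# Hypothetical helper: convert a compass bearing (degrees clockwise from
# north, as printed by geod) into an angle anticlockwise from east.
bearing_to_angle() {
    awk -v az="$1" 'BEGIN { printf "%.3f\n", 90 - az }'
}

# Forward bearing along Steeles, from Dufferin to Kennedy
bearing_to_angle 72.806   # prints 17.194
```

This only makes sense for roughly east-west streets; for a north-south street the bearing itself is already the offset from north.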

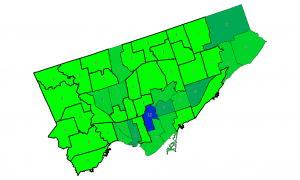
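For anyone wanting to check the arithmetic, the six angles from the two tables can be averaged with a short `awk` pipeline. This is only a sketch; `mean_angle` is a hypothetical helper name:

```shell
# Hypothetical helper: print the arithmetic mean of its arguments,
# rounded to three decimal places.
mean_angle() {
    printf '%s\n' "$@" | awk '{ sum += $1 } END { printf "%.3f\n", sum / NR }'
}

# Bathurst, Yonge, Woodbine, Danforth, Eglinton, Steeles
mean_angle 16.184 16.783 17.049 16.736 16.159 17.194   # prints 16.684
```

A plain mean is fine here because the angles sit within about a degree of each other; angles that wrap around 0°/360° would need a circular mean instead.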

Averaging the numbers for the streets I chose gives 16.684°; just the thing for Torontohenge. For a visual axis, Steeles’s 17.194° might be a better choice, as it’s a line above the city. You can set the map rotation angle in QGIS’s map composer, and get a better-aligned city: