Today, I’m going to describe how I get fairly accurate buffer distances over a really large area.

But first, I’m going to send a huge look of disapproval to Norway. It’s not for getting all of the oil and finding a really mature way of dealing with it, and it’s not for the anti-personnel foods (rancid fish in a can, salt liquorice, sticky brown cheese …) either. It’s for this:

The rest of the world is perfectly fine with having their countries split across Universal Transverse Mercator zones, but not Norway. “Och, my wee fjords…” they whined, and we gave them a whole special wiggle in their UTM zone. Had it not been for Norway’s preciousness, GIS folks the world over could’ve just worked out their UTM zone from a simple calculation, as every other zone is just 6° of longitude wide.
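For the curious, here is roughly what that simple calculation looks like, wiggle included. It's a minimal Python sketch, not anything from the grid data used later: the function name `utm_zone` is made up for illustration, and besides the Norwegian exception it also handles the Svalbard ones (which I haven't complained about above, but they exist in the standard grid).

```python
import math


def utm_zone(lon: float, lat: float) -> int:
    """Return the UTM zone number (1-60) for a WGS84 lon/lat in degrees.

    Hypothetical helper for illustration only.
    """
    # The plain rule: zone 1 starts at 180°W and every zone is 6° wide.
    zone = int(math.floor((lon + 180.0) / 6.0)) % 60 + 1

    # Norway's wiggle: between 56°N and 64°N, zone 32 is widened
    # westward to start at 3°E instead of 6°E.
    if 56.0 <= lat < 64.0 and 3.0 <= lon < 12.0:
        return 32

    # Svalbard: between 72°N and 84°N only zones 31, 33, 35 and 37 are used.
    if 72.0 <= lat < 84.0:
        if 0.0 <= lon < 9.0:
            return 31
        if 9.0 <= lon < 21.0:
            return 33
        if 21.0 <= lon < 33.0:
            return 35
        if 33.0 <= lon < 42.0:
            return 37

    return zone


print(utm_zone(-79.3869585, 43.6425361))  # CN Tower
print(utm_zone(5.32, 60.39))              # Bergen, inside the wiggle
```

Without the two special cases the whole function would be a one-liner, which is rather the point of the grumbling.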

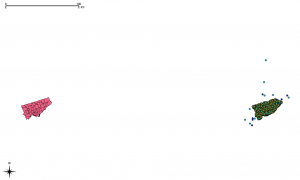

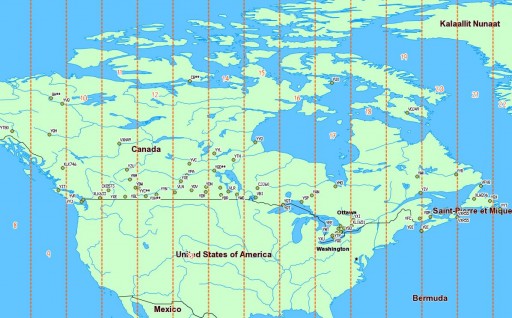

Canada has no such qualms. In a big country (dreams stay with you …), we have a lot of UTM zones:

We’re in zones 8–22, which is great if you’re working in geographic coordinates. If you’re unlucky enough to have to apply distance buffers over a long distance, the Earth is inconveniently un-flat, and accuracy falls apart.
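To put a rough number on "un-flat": the ground length of one degree of longitude shrinks with the cosine of the latitude, so a buffer expressed in degrees means very different distances in Toronto and in the high Arctic. The sketch below assumes a spherical Earth with the mean radius, which is only an approximation, and `metres_per_degree` is a name I've made up for illustration.

```python
import math

# Mean Earth radius in metres (spherical approximation, not the WGS84 ellipsoid).
EARTH_RADIUS_M = 6_371_008.8


def metres_per_degree(lat_deg: float) -> tuple[float, float]:
    """Return approximate (north-south, east-west) metres per degree at lat_deg."""
    per_deg_lat = math.radians(1.0) * EARTH_RADIUS_M
    # Meridians converge towards the poles, so a degree of longitude shrinks.
    per_deg_lon = per_deg_lat * math.cos(math.radians(lat_deg))
    return per_deg_lat, per_deg_lon


for name, lat in [("Toronto", 43.64), ("Alert, NU", 82.5)]:
    ns, ew = metres_per_degree(lat)
    print(f"{name}: 1° lat ≈ {ns / 1000:.1f} km, 1° lon ≈ {ew / 1000:.1f} km")
```

On this approximation a degree of longitude is roughly 80 km at Toronto's latitude and under 15 km at Alert, while a degree of latitude stays around 111 km throughout.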

What we can do, though, is transform a geographic coordinate into a projected one, apply a buffer distance, then transform back to geographic again. UTM zones are quite good for this, and if it weren’t for bloody Norway, it would be a trivial process. So first, we need a source of UTM grid data.

A Source of UTM Grid Data

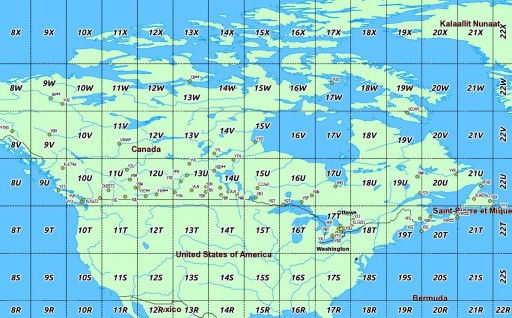

Well, the Global UTM Zones Grid from EPDI looks right, and it’s CC BY-NC-SA licensed. But it’s a bit busy with all the grid squares:

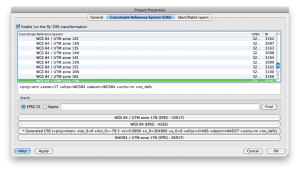

What’s more, there’s no explicit way of getting the numeric zone out of the CODE field (used as labels above). We need to munge this a bit. In a piece of gross data-mangling, I’m using an awk (think: full beard and pork chops) script to process a GeoJSON (all ironic facial hair and artisanal charcuterie) dump of the shape file. I’m not content to just return the zone number; I’m turning it into the EPSG WGS84 SRID of the zone, a 5-digit number understood by proj.4:

32hzz

where:

- h is the hemisphere: 6 for north, 7 for south.

- zz is the zone number.

I live in Zone 17 North, so my SRID is 32617.
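The 32hzz rule is easy to sketch in Python. To be clear, this is not the awk script, just an illustration of the arithmetic. The second function rests on an assumption of mine: that the CODE field holds a zone number followed by a standard latitude band letter (something like `17T`), where bands N to X are in the northern hemisphere and C to M in the southern. Check your own data before trusting it. Both function names are made up.

```python
import re


def utm_srid(zone: int, north: bool = True) -> int:
    """Return the EPSG code for 'WGS 84 / UTM zone <zone>' (32hzz)."""
    if not 1 <= zone <= 60:
        raise ValueError(f"UTM zone out of range: {zone}")
    # h = 6 for north (326zz), h = 7 for south (327zz).
    return (32600 if north else 32700) + zone


def srid_from_code(code: str) -> int:
    """Derive the SRID from a grid label such as '17T'.

    ASSUMPTION: the label is <zone number><latitude band letter>, with
    band letters C-X (I and O unused); N and later letters are north.
    """
    match = re.fullmatch(r"(\d{1,2})([C-HJ-NP-X])", code.strip().upper())
    if match is None:
        raise ValueError(f"unrecognised grid label: {code!r}")
    zone, band = int(match.group(1)), match.group(2)
    return utm_srid(zone, north=band >= "N")


print(utm_srid(17))            # Zone 17 North
print(srid_from_code("17T"))   # same zone, from an assumed label format
```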

Here’s the code to do it: zones_add_epsg-awk (which you’ll likely have to rename/fix permissions on). To use it:

- Unzip the Global UTM zones grid.

- Convert the shape file to GeoJSON, using ogr2ogr:

ogr2ogr -f GeoJSON utm_zones_final.geojson utm_zones_final.shp - Process it:

./zones_add_epsg.awk utm_zones_final.geojson > utm_zones_final-srid.geojson - Convert the modified GeoJSON back to a shapefile:

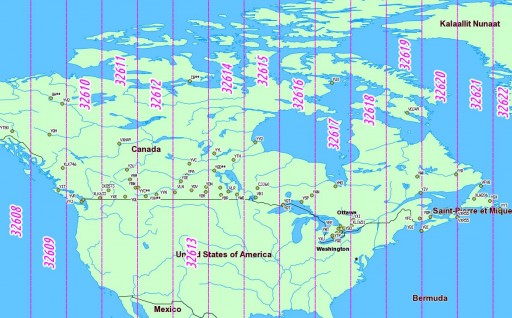

ogr2ogr utm_zones_final-srid.shp utm_zones_final-srid.geojson - Now some magic: create a simplified shapefile with entire UTM zones keyed against the (integer) SRID:

ogr2ogr wgs84utm.shp utm_zones_final-srid.shp -dialect SQLITE -sql 'SELECT epsgsrid,ST_Union(Geometry) FROM "utm_zones_final-srid" GROUP BY epsgsrid;'

And, lo!

So we can now load this wgs84utm shapefile as a table in SpatiaLite. If you wanted to find the zone for the CN Tower (hint: it’s the same as me), you could run:

select EPSGSRID from wgs84utm where within(GeomFromText('POINT(-79.3869585 43.6425361)',4326), geom);

which returns ‘32617’, as expected.

Making the Transform

(I have to admit, I was amazed when this next bit worked.)

Let’s say we have to identify all the VOR stations in Canada, and draw a 25 km exclusion buffer around them (hey, someone might want to …). VOR stations can be queried from TAFL using the following criteria:

- the licensee is Nav Canada, or similar,

- the TX frequency is between 108 and 117.96 MHz,

- the location contains ‘VOR’.

This can be coded as:

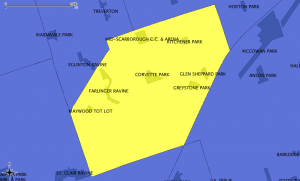

SELECT * FROM tafl WHERE licensee LIKE 'NAV CANADA%' AND tx >= 108 AND tx <= 117.96 AND location LIKE '%VOR%';

which returns a list of 67 stations, from VYT80 on Mount Macintyre, YT to YYT St. John’s, NL. We can use this, along with the UTM zone query above, to make beautiful, beautiful circles:

SELECT tafl.PK_ROWID, tafl.tx, tafl.location, tafl.callsign, Transform(Buffer(Transform(tafl.geom, wgs84utm.epsgsrid), 25000), 4326) AS bgeom FROM tafl, wgs84utm WHERE tafl.licensee LIKE 'NAV CANADA%' AND tafl.tx >= 108 AND tafl.tx <= 117.96 AND tafl.location LIKE '%VOR%' AND Within(tafl.geom, wgs84utm.geom);

Ta-da!

Yes, they look oval; don’t forget that geographic coordinates don’t maintain rectilinearity. Transformed to UTM, they look much more circular: